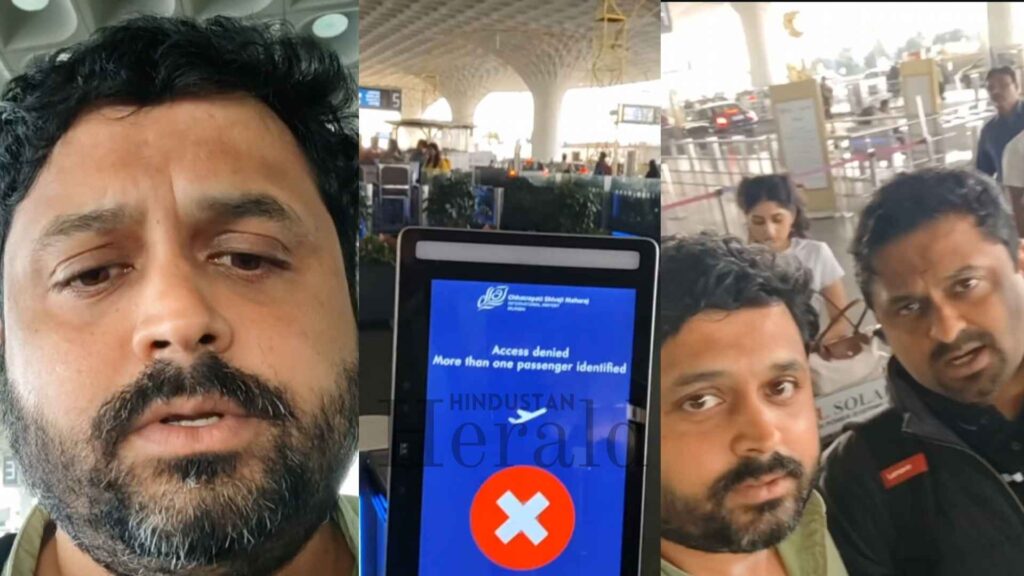

Mumbai, January 8: For a system sold on speed, convenience, and frictionless travel, the moment was jarringly awkward. Two brothers stood at the DigiYatra gate inside Chhatrapati Shivaji Maharaj International Airport, tickets booked, faces registered, expecting the usual green light. Instead, the screen flashed red. Access denied. The reason followed, blunt and almost comic in its phrasing: more than one person found with the same face.

The brothers were identical twins.

What might have remained a forgettable delay became a viral talking point after one of them filmed the encounter and posted it online. Between January 4 and 7, the clip ricocheted across platforms, drawing millions of views and sparking a wider conversation about how far India’s biometric ambitions have come and where they still stumble.

A Routine Airport Entry That Was Anything But

The twins, identified as Prashant Menon and his brother, were not testing the system out of curiosity. Both were registered users of DigiYatra, the government-backed facial recognition platform now operational at several major Indian airports. They had separate tickets, separate identities, and no expectation of trouble.

Yet as they approached the facial recognition gate at Mumbai airport, the software froze them out. No quiet alert to airport staff. No polite prompt to step aside. Just a public rejection.

In the video, one brother narrates the scene with disbelief, holding his phone up to the gate’s display. His tone is amused at first, then puzzled. “See the magic of DigiYatra,” he says, pointing to the message on the screen.

Airport personnel soon stepped in, directing both men toward manual verification counters. Their documents were checked the old-fashioned way. They were cleared to travel and, by all accounts, did not miss their flight.

But the damage, at least online, was already done.

Why This One Clip Struck A Nerve

DigiYatra has been pitched as a quiet revolution in Indian air travel. No repeated ID checks. No boarding pass juggling. Just walk, scan, and go.

That promise has found an eager audience, particularly among frequent flyers tired of queues. Which is precisely why this incident landed the way it did. Watching the system stumble over something as basic as identical twins made many wonder how robust it really is.

Social media reactions ranged from jokes about Bollywood twins confusing machines to more serious concerns about reliability and oversight. Some users asked whether facial recognition could be trusted at scale. Others shrugged, pointing out that identical twins confuse humans, too.

Still, the optics mattered. An automated gate saying “access denied” at one of India’s busiest airports is not the image DigiYatra wants circulating.

What DigiYatra Said And What It Meant

The response from DigiYatra Foundation came quickly.

Officials clarified that the incident was neither a system crash nor a data error. According to them, this was a known edge case. Identical twins, or people with extremely close facial resemblance, can trigger a safeguard within the system. When the software detects overlapping biometric markers, it refuses automated clearance rather than risk matching a passenger to the wrong ticket.

In plain terms, the system chose caution over convenience.

The Foundation also stressed that DigiYatra does not replace security or boarding protocols. It sits alongside them. When the technology hesitates, manual verification takes over. Passengers are not denied boarding. They are simply asked to prove who they are, the way travellers have always done.

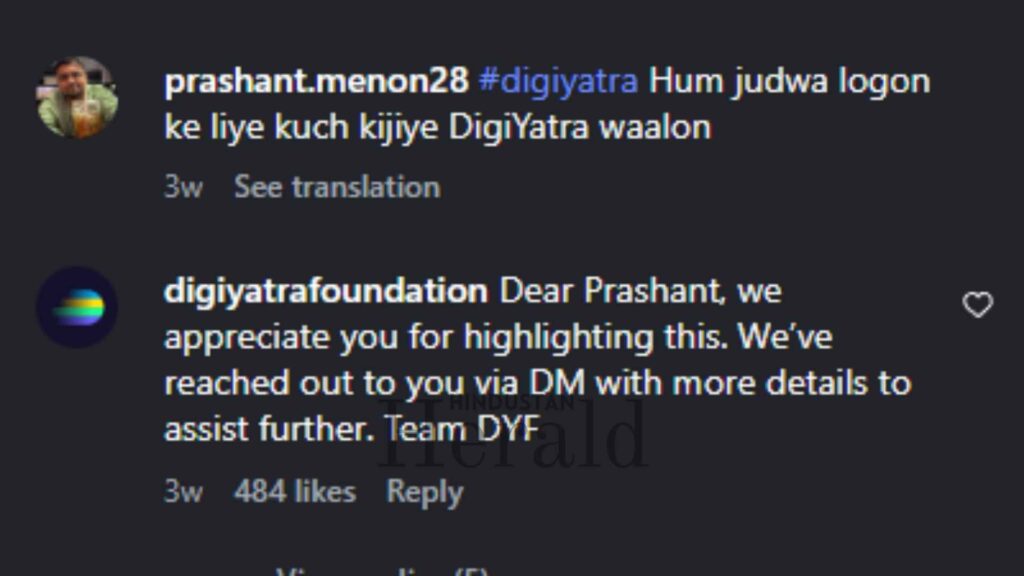

The organisation even reached out to the brothers online, offering assistance and reiterating that such incidents are rare.

How The Technology Sees Faces

Facial recognition systems do not look at faces the way humans do. They measure distances, angles, contours, and patterns. In DigiYatra’s case, each face is mapped to a specific journey. One face, one ticket.

That design choice is meant to prevent misuse. No one should be able to walk through on someone else’s booking, even if they look similar. When two nearly identical faces appear in the system at the same time, the algorithm faces a dilemma. Pick one and risk a security breach, or block both and call for human judgment.

It chose the second.

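Experts quoted in multiple reports pointed out that this behaviour is intentional. In security-sensitive environments like airports, a false positive, where the system clears a passenger against the wrong identity, is dangerous. A false negative, where a legitimate passenger is turned away from the automated gate, is inconvenient but safer.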

From that angle, the system did exactly what it was designed to do.
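For readers curious how a "block both" safeguard might work, here is a minimal sketch. It is not DigiYatra's actual code, which has not been published in the reports. It assumes each enrolled face is stored as a short numeric template, that two templates are compared by cosine similarity, and that a fixed cut-off decides a match. The names `gate_decision` and `MATCH_THRESHOLD`, the template values, and the passenger IDs are all hypothetical.

```python
import math
from typing import Dict, List

# Hypothetical cut-off: similarity at or above this counts as a match.
MATCH_THRESHOLD = 0.95


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Similarity of two face templates; values near 1.0 mean near-identical."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def gate_decision(probe: List[float], enrolled: Dict[str, List[float]]) -> str:
    """Clear a passenger only when exactly one enrolled template matches."""
    matches = [
        passenger_id
        for passenger_id, template in enrolled.items()
        if cosine_similarity(probe, template) >= MATCH_THRESHOLD
    ]
    if len(matches) == 1:
        return "ALLOW:" + matches[0]
    if not matches:
        return "MANUAL_CHECK:no_match"
    # More than one candidate: refuse rather than guess which ticket applies.
    return "MANUAL_CHECK:ambiguous"


# Made-up templates: two near-identical faces and one unrelated passenger.
enrolled_demo = {
    "twin_a": [0.60, 0.80, 0.10],
    "twin_b": [0.61, 0.79, 0.11],
    "other": [0.90, 0.10, 0.40],
}

print(gate_decision([0.60, 0.80, 0.10], enrolled_demo))
```

With both twins enrolled, the face at the gate matches two templates, so the sketch sends the passenger to manual verification instead of picking one. Remove either twin from the enrolled set and the same face clears automatically.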

Flaw Or Fair Trade-Off

This distinction has not stopped debate.

Critics argue that if a national biometric platform cannot smoothly handle identical twins, its limitations should be more openly acknowledged. India's population is neither small nor homogeneous. Edge cases here number in the millions.

Others counter that no biometric system anywhere in the world claims perfection. Airports in the United States, Europe, and East Asia have reported similar issues with twins and close relatives. The difference, they say, is that those incidents rarely go viral.

There is also the question of expectation. DigiYatra’s messaging has leaned heavily on seamlessness. Incidents like this expose the gap between promise and practice.

Beyond The Gate, A Question Of Trust

The episode has also nudged broader concerns back into public view. Facial recognition systems require trust. Trust that data is secure. Trust that errors are handled fairly. Trust that humans remain in the loop.

For many passengers, this was the first visible reminder that DigiYatra is not magic. It is software, built by humans, operating within defined limits.

Privacy advocates have seized on the moment to call for clearer communication. If certain users are more likely to face issues, such as identical twins, that should be explained upfront. Not discovered at a boarding gate with a phone camera rolling.

What Changes, If Anything, After This

There is no indication that DigiYatra will roll back or pause its expansion. Passenger adoption remains strong, and airport authorities continue to back the system as a solution to crowd management.

What may change is refinement. Experts suggest future versions could incorporate secondary checks for known problem cases, perhaps flagging twins in advance or prompting additional verification before travellers reach the gate.
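One way the "flagging twins in advance" idea could work is a check at enrolment time rather than at the gate. The sketch below is purely illustrative and does not describe any announced DigiYatra feature. It assumes face templates are short numeric vectors compared by cosine similarity, and that pairs above a chosen cut-off are marked for extra verification. `flag_lookalikes`, `LOOKALIKE_THRESHOLD`, and the sample data are hypothetical.

```python
import math
from itertools import combinations
from typing import Dict, List, Tuple

# Hypothetical cut-off for treating two enrolled faces as lookalikes.
LOOKALIKE_THRESHOLD = 0.98


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Similarity of two face templates; values near 1.0 mean near-identical."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def flag_lookalikes(enrolled: Dict[str, List[float]]) -> List[Tuple[str, str]]:
    """Return every pair of enrolled passengers whose templates nearly coincide."""
    flagged = []
    # Sort by passenger ID so the output order is stable.
    for (id_a, tmpl_a), (id_b, tmpl_b) in combinations(sorted(enrolled.items()), 2):
        if cosine_similarity(tmpl_a, tmpl_b) >= LOOKALIKE_THRESHOLD:
            flagged.append((id_a, id_b))
    return flagged


# Made-up templates: two near-identical faces and one unrelated passenger.
enrolled_demo = {
    "twin_a": [0.60, 0.80, 0.10],
    "twin_b": [0.61, 0.79, 0.11],
    "other": [0.90, 0.10, 0.40],
}

print(flag_lookalikes(enrolled_demo))
```

Passengers in a flagged pair could then be told before they travel that the automated gate may send them to a manual counter. Comparing every pair is fine for a toy example; a real system with millions of enrolments would need a faster search method.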

For now, the advice from airport officials is simple. Even if you use DigiYatra, carry your physical ID. Technology can speed things up. It cannot replace preparedness.

A Small Moment In A Bigger Shift

In the end, this was a minor delay for two passengers and a brief inconvenience in an otherwise routine journey. Yet it captured something larger.

India is moving fast toward automation. Airports are one of the most visible testing grounds for that push. When systems work, they fade into the background. When they fail, even briefly, they force a pause.

The twins walked away, flights boarded, story told. DigiYatra walked away too, still standing, but slightly more human in the public eye. Efficient, ambitious, and, as it turns out, capable of being confused by brothers who look exactly alike.

For now, that seems like a reasonable reminder of where technology stands. Powerful, useful, but not infallible.

